A Guide to Chain-of-Thought (CoT) Prompting

In the world of AI, particularly in natural language processing (NLP), how a model is prompted can dramatically change its performance. Standard prompting, where a model is simply asked to generate an answer, has long been the dominant approach.

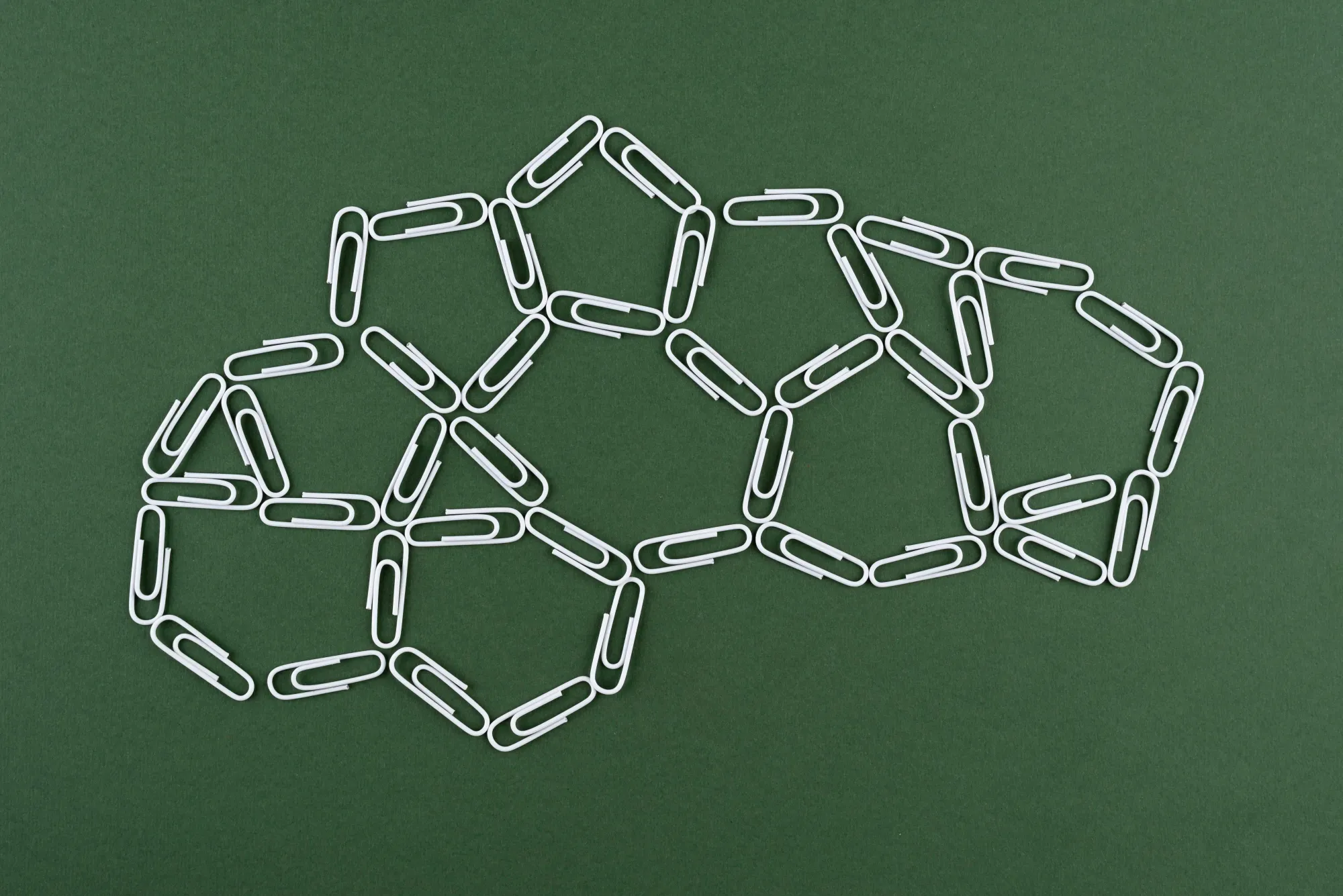

But when it comes to tasks requiring reasoning or complex problem-solving, Chain-of-Thought (CoT) prompting has emerged as a superior alternative. CoT involves guiding the model to think step-by-step, much like how humans approach multi-step problems.

This blog will explore the key differences between standard prompting and CoT, dive into different types of CoT prompting, and weigh the advantages and disadvantages of this innovative technique.

Standard Prompting vs. Chain-of-Thought Prompting

Standard Prompting is a straightforward approach where a model is provided with a question or problem, and it directly generates an answer. While this is effective for simple queries, it falters in tasks that require reasoning or multiple steps to solve.

For example, imagine asking a model a simple math problem using few-shot prompting:

Prompt:

Q: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now?

A: The answer is 11.

Q: The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, how many apples do they have?

Output:

A: The answer is 27.

This is incorrect; the right answer is 23 - 20 + 6 = 9. Asked to jump straight to the answer, the model fails to reason through the arithmetic.

Chain-of-Thought Prompting breaks down the problem into smaller, interpretable steps, allowing the model to reason through the task. Using the same example:

Prompt:

Q: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now?

A: Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. 5 + 6 = 11. The answer is 11.

Q: The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, how many apples do they have?

Output:

A: The cafeteria had 23 apples originally. They used 20 to make lunch. So they had 23 - 20 = 3. They bought 6 more apples, so they have 3 + 6 = 9. The answer is 9.

The structured thinking embedded within CoT allows the model to handle problems requiring logic, arithmetic, or causal reasoning far more effectively than standard prompting.
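The two prompts above differ only in how the exemplar answers are written, so both can be generated from the same data. The following is a minimal Python sketch, assuming the plain-text Q:/A: format shown in the examples; `Exemplar`, `build_prompt`, and `extract_answer` are hypothetical helpers rather than part of any library, and the parser assumes the model ends with "The answer is N." as the exemplars do.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Exemplar:
    question: str
    answer: str          # final answer only, e.g. "11"
    rationale: str = ""  # worked steps; included only in CoT prompts


def build_prompt(exemplars: list[Exemplar], question: str, chain_of_thought: bool) -> str:
    """Assemble a few-shot prompt in the Q:/A: format used in the examples above."""
    blocks = []
    for ex in exemplars:
        if chain_of_thought and ex.rationale:
            answer = f"{ex.rationale} The answer is {ex.answer}."
        else:
            answer = f"The answer is {ex.answer}."
        blocks.append(f"Q: {ex.question}\nA: {answer}")
    # The test question ends with an open "A:" for the model to complete.
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)


# Assumes the model finishes with "The answer is N." as the exemplars do.
ANSWER_RE = re.compile(r"The answer is\s+(-?[\d.,]+)")


def extract_answer(completion: str) -> Optional[str]:
    """Return the number after the last 'The answer is', or None if absent."""
    matches = ANSWER_RE.findall(completion)
    return matches[-1].rstrip(".,").replace(",", "") if matches else None


roger = Exemplar(
    question=("Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
              "Each can has 3 tennis balls. How many tennis balls does he have now?"),
    answer="11",
    rationale=("Roger started with 5 balls. 2 cans of 3 tennis balls each is "
               "6 tennis balls. 5 + 6 = 11."),
)
cafeteria = ("The cafeteria had 23 apples. If they used 20 to make lunch "
             "and bought 6 more, how many apples do they have?")

print(build_prompt([roger], cafeteria, chain_of_thought=True))
print(extract_answer("So they have 3 + 6 = 9. The answer is 9."))  # 9
```

The parser takes the last "The answer is" match on purpose: a CoT completion contains many intermediate numbers, so the final answer has to be located by its marker rather than by its position.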


Types of Chain-of-Thought (CoT) Prompting

1. Zero-Shot CoT Prompting: Instead of manually crafting detailed examples, a model is given a simple instruction like “Let’s think step by step” after the question. This approach can work without task-specific demonstrations, making it easier to implement but potentially less accurate.

Example:

Prompt: What is 17 divided by 2? Let's think step by step.

Model Output: To solve 17 divided by 2, first note that 2 goes into 17 eight times, because 2 × 8 = 16. The remainder is 1, and 1 divided by 2 is 0.5, so the answer is 8 + 0.5 = 8.5.
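In practice, Zero-Shot CoT is often run in two stages: one call generates the reasoning, and a second call appends that reasoning and asks for the final answer. The sketch below follows the two-stage template described in the original Zero-Shot-CoT paper, where the trigger opens the answer right after the question. `complete` is a hypothetical stand-in for whatever function sends a prompt to your model, and `fake_model` returns canned text purely for demonstration.

```python
from typing import Callable

TRIGGER = "Let's think step by step."
# Stage-2 phrase for numeric answers; other answer formats would need other wording.
ANSWER_TRIGGER = "Therefore, the answer (arabic numerals) is"


def reasoning_prompt(question: str) -> str:
    """Stage 1: the trigger follows the question, opening the answer."""
    return f"Q: {question}\nA: {TRIGGER}"


def answer_prompt(question: str, reasoning: str) -> str:
    """Stage 2: append the generated reasoning and ask for the final answer."""
    return f"{reasoning_prompt(question)} {reasoning}\n{ANSWER_TRIGGER}"


def zero_shot_cot(question: str, complete: Callable[[str], str]) -> tuple[str, str]:
    """Run both stages; `complete` is a hypothetical prompt -> completion function."""
    reasoning = complete(reasoning_prompt(question)).strip()
    answer = complete(answer_prompt(question, reasoning)).strip()
    return reasoning, answer


def fake_model(prompt: str) -> str:
    """Canned responses for this demo only; swap in a real model call in practice."""
    if prompt.endswith(ANSWER_TRIGGER):
        return " 8.5."
    return " 2 goes into 17 eight times (2 x 8 = 16), remainder 1, and 1 / 2 = 0.5."


reasoning, answer = zero_shot_cot("What is 17 divided by 2?", fake_model)
print(reasoning)
print(answer)  # 8.5.
```

Splitting reasoning and answer into separate calls keeps the final answer in a predictable place, at the cost of a second model call.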

2. Auto-CoT Prompting: A technique that automates the creation of reasoning demonstrations for language models. Instead of manually crafting demonstrations (as in Manual-CoT), Auto-CoT selects a diverse set of questions and uses the model itself to generate a reasoning chain for each one, which reduces the need for manual effort.

Example of Auto-CoT Prompting:

Suppose this question is sampled from the pool of questions:

Question: A chef needs to cook 15 potatoes. He has already cooked 8. If each potato takes 9 minutes to cook, how long will it take him to cook the rest?

Generated Reasoning (Auto-CoT):

"Let's think step by step. The chef has already cooked 8 potatoes. That means there are 7 potatoes left to cook. Each potato takes 9 minutes. So, it will take 9 × 7 = 63 minutes to cook the remaining potatoes. The answer is 63."

This generated question-and-reasoning pair is then used as a demonstration in the prompt, so that a new test question can be answered with similar step-by-step reasoning.

How Auto-CoT Works:

- Clustering: It first clusters questions into different groups based on semantic similarity.

- Sampling Demonstrations: It then samples representative questions from each cluster, generating reasoning chains using Zero-Shot-CoT (by adding "Let's think step by step" prompts).

- Diversity-Based Sampling: The approach ensures diversity in the sampled questions, reducing the risk of replicating mistakes in similar questions.

In the original Auto-CoT experiments, this approach consistently matched or exceeded the performance of manually designed CoT demonstrations, by combining automatically generated reasoning steps with diversity in sampling.
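The clustering and sampling steps described above can be sketched in pure Python. This is a simplified illustration, not the reference Auto-CoT implementation: it swaps the sentence embeddings a real system would use for bag-of-words vectors, runs a small k-means with deterministic farthest-point initialisation, picks the question nearest each cluster centre, and calls a hypothetical `zero_shot_cot` function (stubbed here) in place of a real model.

```python
import math
import re
from typing import Callable


def tokens(text: str) -> list[str]:
    return re.findall(r"[a-z]+", text.lower())


def vectorize(questions: list[str]) -> list[list[float]]:
    """Bag-of-words vectors, L2-normalised; a crude stand-in for sentence embeddings."""
    vocab = sorted({w for q in questions for w in tokens(q)})
    index = {w: i for i, w in enumerate(vocab)}
    vecs = []
    for q in questions:
        v = [0.0] * len(vocab)
        for w in tokens(q):
            v[index[w]] += 1.0
        norm = math.sqrt(sum(x * x for x in v)) or 1.0
        vecs.append([x / norm for x in v])
    return vecs


def dist2(a: list[float], b: list[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def assign(vecs: list[list[float]], centroids: list[list[float]]) -> list[int]:
    """Label each vector with the index of its nearest centroid."""
    return [min(range(len(centroids)), key=lambda j: dist2(v, centroids[j])) for v in vecs]


def kmeans(vecs: list[list[float]], k: int, iters: int = 20):
    # Deterministic farthest-point initialisation keeps the sketch reproducible.
    centroids = [vecs[0]]
    while len(centroids) < k:
        centroids.append(max(vecs, key=lambda v: min(dist2(v, c) for c in centroids)))
    labels = assign(vecs, centroids)
    for _ in range(iters):
        for j in range(k):
            members = [v for v, lab in zip(vecs, labels) if lab == j]
            if members:  # keep the old centroid if a cluster empties out
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
        new_labels = assign(vecs, centroids)
        if new_labels == labels:
            break
        labels = new_labels
    return labels, centroids


def auto_cot_demos(questions: list[str], k: int,
                   zero_shot_cot: Callable[[str], str]) -> list[tuple[str, str]]:
    """Cluster the questions, take the one nearest each centre, and generate its reasoning."""
    vecs = vectorize(questions)
    labels, centroids = kmeans(vecs, k)
    demos = []
    for j in range(k):
        members = [i for i, lab in enumerate(labels) if lab == j]
        if not members:
            continue
        rep = min(members, key=lambda i: dist2(vecs[i], centroids[j]))
        demos.append((questions[rep], zero_shot_cot(questions[rep])))
    return demos


def build_auto_cot_prompt(demos: list[tuple[str, str]], test_question: str) -> str:
    """Place the generated demonstrations before the test question."""
    parts = [f"Q: {q}\nA: {r}" for q, r in demos]
    parts.append(f"Q: {test_question}\nA:")
    return "\n\n".join(parts)


# Hypothetical stub; a real pipeline would call the model with the Zero-Shot-CoT trigger.
def stub_zero_shot_cot(question: str) -> str:
    return f"Let's think step by step. <model-generated reasoning for: {question}>"


pool = [
    "A chef needs to cook 15 potatoes. He has already cooked 8. If each potato "
    "takes 9 minutes to cook, how long will it take him to cook the rest?",
    "A chef has cooked 3 of 10 potatoes. Each potato takes 5 minutes to cook. "
    "How long will the rest take?",
    "Is a tomato a fruit or a vegetable?",
    "Is a whale a fish or a mammal?",
]
demos = auto_cot_demos(pool, k=2, zero_shot_cot=stub_zero_shot_cot)
print(build_auto_cot_prompt(demos, "The cafeteria had 23 apples. If they used 20 to "
                            "make lunch and bought 6 more, how many apples do they have?"))
```

Because each demonstration comes from a different cluster, the two similar chef questions do not both end up in the prompt: one demonstration covers the arithmetic questions and the other covers the commonsense questions, which is the diversity property the sampling step aims for.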

Advantages of Chain-of-Thought Prompting

- Improved Reasoning Capabilities: CoT prompting is designed to elicit logical steps from the model, which makes it much more effective for tasks requiring multi-step reasoning. Whether the task is a math problem, commonsense reasoning, or symbolic manipulation, a model prompted with CoT can break the problem into smaller, manageable parts, much like human thought processes.

- Enhanced Model Interpretability: The step-by-step approach not only helps models perform better but also makes their thought process interpretable. By seeing how a model arrives at an answer, users can understand its reasoning and pinpoint where errors occur, if any.

- Task Versatility: CoT prompting isn't limited to specific tasks. It has proven useful across arithmetic reasoning, commonsense problem-solving, and even symbolic reasoning, making it a highly adaptable technique.

- Reduction in Data Requirements: Since CoT prompting uses few-shot or zero-shot methods, it doesn’t require extensive training data for each specific task. A single language model can handle multiple tasks simply by adjusting the prompt, saving time and resources.

Disadvantages of Chain-of-Thought Prompting

- Dependence on Model Scale: The effectiveness of CoT prompting depends heavily on the size of the language model. Smaller models often fail to generate coherent reasoning chains, which limits the usefulness of CoT to the largest models. In the original CoT experiments, for example, tangible benefits appeared only in models of roughly 100 billion parameters or more.

- Risk of Error Propagation: While breaking a problem into steps is generally beneficial, a mistake in one step can propagate through the rest of the reasoning chain and lead to an incorrect final answer. This can be particularly problematic in Zero-Shot CoT, where no hand-written demonstrations guide the reasoning, so the generated chains can include flawed logic.

- Computational Cost: Because CoT requires generating intermediate reasoning steps before the final answer, the model must produce many more output tokens, which makes it slower and more resource-intensive than standard prompting.
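The last two drawbacks can be made concrete with the cafeteria example. The sketch below makes two simplifying assumptions: it treats each reasoning step as a function applied to the previous step's result (a toy model, not how an LLM actually computes), and it counts whitespace-separated words as a rough stand-in for tokens, since real tokenizers split text differently. `run_chain` and `rough_token_count` are hypothetical helpers, and the word count ignores that CoT exemplars also make the prompt itself longer.

```python
from typing import Callable


def run_chain(start: int, steps: list[Callable[[int], int]]) -> list[int]:
    """Apply each reasoning step to the previous result, keeping every intermediate value."""
    values = [start]
    for step in steps:
        values.append(step(values[-1]))
    return values


def rough_token_count(text: str) -> int:
    """Whitespace word count: a rough proxy, since real tokenizers split text differently."""
    return len(text.split())


# Error propagation: the cafeteria problem as two steps (use 20, then buy 6).
correct = run_chain(23, [lambda x: x - 20, lambda x: x + 6])
# Hypothetical slip in step 1 (23 - 20 computed as 13); step 2 is still done correctly.
faulty = run_chain(23, [lambda x: 13, lambda x: x + 6])
print(correct)  # [23, 3, 9]
print(faulty)   # [23, 13, 19]

# Computational cost: compare the length of the two outputs shown earlier.
standard_output = "A: The answer is 27."
cot_output = (
    "A: The cafeteria had 23 apples originally. They used 20 to make lunch. "
    "So they had 23 - 20 = 3. They bought 6 more apples, so they have 3 + 6 = 9. "
    "The answer is 9."
)
std_len = rough_token_count(standard_output)
cot_len = rough_token_count(cot_output)
print(f"standard: {std_len} words, CoT: {cot_len} words ({cot_len / std_len:.1f}x)")
```

In the faulty chain the second step ("add 6") is carried out correctly, yet the final answer is still wrong because it inherits the slip from step one. By the rough word count, the CoT output is between seven and eight times longer than the standard one.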

Conclusion

Chain-of-Thought prompting is a game-changer for AI models, especially in tasks requiring complex reasoning. Whether through manually curated examples, automated demonstrations, or simple zero-shot prompts, CoT allows models to break down difficult problems in a more human-like fashion. Though it requires large-scale models and incurs higher computational costs, the benefits of reasoning ability and interpretability make it a valuable tool in the evolving landscape of AI.

For businesses and developers working with NLP systems, CoT represents a step towards more intelligent, capable, and explainable AI.

Frequently Asked Questions

What is Chain-of-Thought (CoT) Prompting?

CoT prompting is a technique that guides AI models to break down complex problems into smaller, interpretable steps, similar to human reasoning, leading to more accurate and logical responses.

What are the different types of Chain-of-Thought Prompting?

There are three main types: few-shot (manual) CoT with hand-written examples, Zero-Shot CoT using a simple step-by-step instruction, and Auto-CoT, which automatically generates reasoning-chain demonstrations.

What are the main benefits of using CoT Prompting?

CoT prompting improves reasoning capabilities, enhances model interpretability, works across various tasks, and reduces the need for extensive task-specific training data.